In this article, you’ll learn what small language models are, why they matter in 2026, and how to use them effectively in real production systems.

Topics we will cover include:

- What defines small language models and how they differ from large language models.

- The cost, latency, and privacy advantages driving SLM adoption.

- Practical use cases and a clear path to getting started.

Let’s get straight to it.

Introduction to Small Language Models: The Complete Guide for 2026

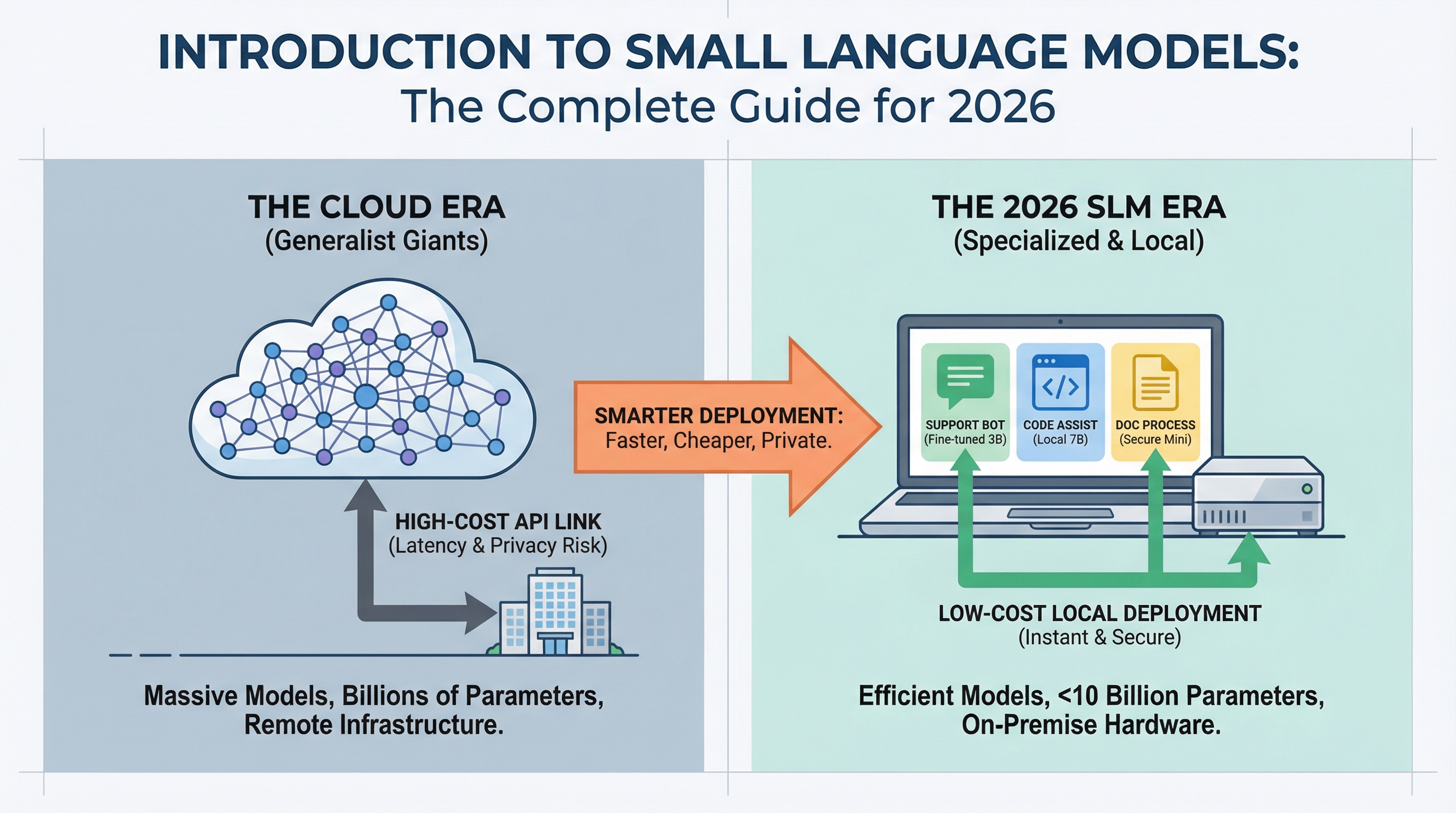

Image by Author

Introduction

AI deployment is changing. While headlines focus on ever-larger language models breaking new benchmarks, production teams are discovering that smaller models can handle most everyday tasks at a fraction of the cost.

If you’ve deployed a chatbot, built a code assistant, or automated document processing, you’ve probably paid for cloud API calls to models with hundreds of billions of parameters. But many practitioners working in 2026 are finding that for roughly 80% of production use cases, a model you can run on a laptop works just as well and can cost up to 95% less. If you want to jump straight into hands-on options, our guide to the Top 7 Small Language Models You Can Run on a Laptop covers the best models available today and how to get them running locally.

Small language models (SLMs) make this possible. This guide covers what they are, when to use them, and how they’re changing the economics of AI deployment.

What Are Small Language Models?

Small language models are language models with fewer than 10 billion parameters, usually ranging from 1 billion to 7 billion.

Parameters are the “knobs and dials” inside a neural network. Each parameter is a numerical value the model uses to transform input text into predictions about what comes next. When you see a claim like “GPT-4 has over 1 trillion parameters” (a widely reported estimate; OpenAI has not published the figure), it means the model has roughly 1 trillion of these adjustable values working together to understand and generate language. More parameters generally mean more capacity to learn patterns, but they also mean more computational power, memory, and cost to run.

The scale difference is significant. GPT-4 is reported to have over 1 trillion parameters, Claude Opus is estimated to have hundreds of billions (neither vendor publishes exact counts), and even Llama 3.1 70B is considered “large.” SLMs operate at a completely different scale.

But “small” doesn’t mean “simple.” Modern SLMs like Phi-3 Mini (3.8B parameters), Llama 3.2 3B, and Mistral 7B deliver performance that rivals models 10× their size on many tasks. The real difference is specialization.

Where large language models are trained to be generalists with broad knowledge spanning every topic imaginable, SLMs excel when fine-tuned for specific domains. A 3B model trained on customer support conversations can outperform GPT-4 on your specific support queries while running on hardware you already own.

You Don’t Build Them From Scratch

Adopting an SLM doesn’t mean building one from the ground up. Even “small” models are far too complex for individuals or small teams to train from scratch. Instead, you download a pre-trained model that already understands language, then teach it your specific domain through fine-tuning.

It’s like hiring an employee who already speaks English and training them on your company’s procedures, rather than teaching a baby to speak from birth. The model arrives with general language understanding built in. You’re just adding specialized knowledge.

You don’t need a team of PhD researchers or massive computing clusters. You need a developer with Python skills, some example data from your domain, and a few hours of GPU time. The barrier to entry is much lower than most people assume.

Why SLMs Matter in 2026

Three forces are driving SLM adoption: cost, latency, and privacy.

Cost: Cloud API pricing for large models runs $0.01 to $0.10 per 1,000 tokens. At scale, this adds up fast. A customer support system handling 100,000 queries per day can rack up $30,000+ monthly in API costs. An SLM running on a single GPU server costs the same in hardware whether it processes 10,000 or 10 million queries, as long as the server can keep up with the load. The economics flip entirely.
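To make the cost arithmetic concrete, here is a minimal sketch. The 100,000 queries per day and the $0.01 per 1,000 tokens come from the paragraph above; the 1,000 tokens per query and the $1,500 monthly server cost are assumptions chosen for illustration, so substitute your own numbers.

```python
def api_cost(queries, tokens_per_query, price_per_1k_tokens):
    """Pay-per-token cost in dollars for a given number of queries."""
    return queries * tokens_per_query / 1000 * price_per_1k_tokens


def monthly_costs(queries_per_day, tokens_per_query, price_per_1k_tokens,
                  server_cost_per_month, days=30):
    """Return (API cost, self-hosted cost) for one month of traffic."""
    api = api_cost(queries_per_day * days, tokens_per_query, price_per_1k_tokens)
    # Self-hosted cost is flat: it does not depend on query volume,
    # as long as the server can keep up with the load.
    return api, server_cost_per_month


# Assumed inputs: 1,000 tokens per query and a $1,500/month GPU server
# are illustrative guesses, not measured figures.
api, hosted = monthly_costs(100_000, 1_000, 0.01, server_cost_per_month=1_500)
print(f"API: ${api:,.0f}/month, self-hosted: ${hosted:,.0f}/month")
```

With these assumed inputs the API bill lands at about $30,000 per month, which matches the figure quoted above. The self-hosted number ignores engineering time, electricity, and redundancy, so treat it as a lower bound.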

Latency: When you call a cloud API, you’re waiting for network round-trips plus inference time. SLMs running locally can respond in 50 to 200 milliseconds. For applications like coding assistants or interactive chatbots, users feel this difference immediately.

Privacy: Regulated industries (healthcare, finance, legal) often can’t send sensitive data to external APIs. SLMs let these organizations deploy AI while keeping data on-premise. No external API calls means no data leaves your infrastructure.

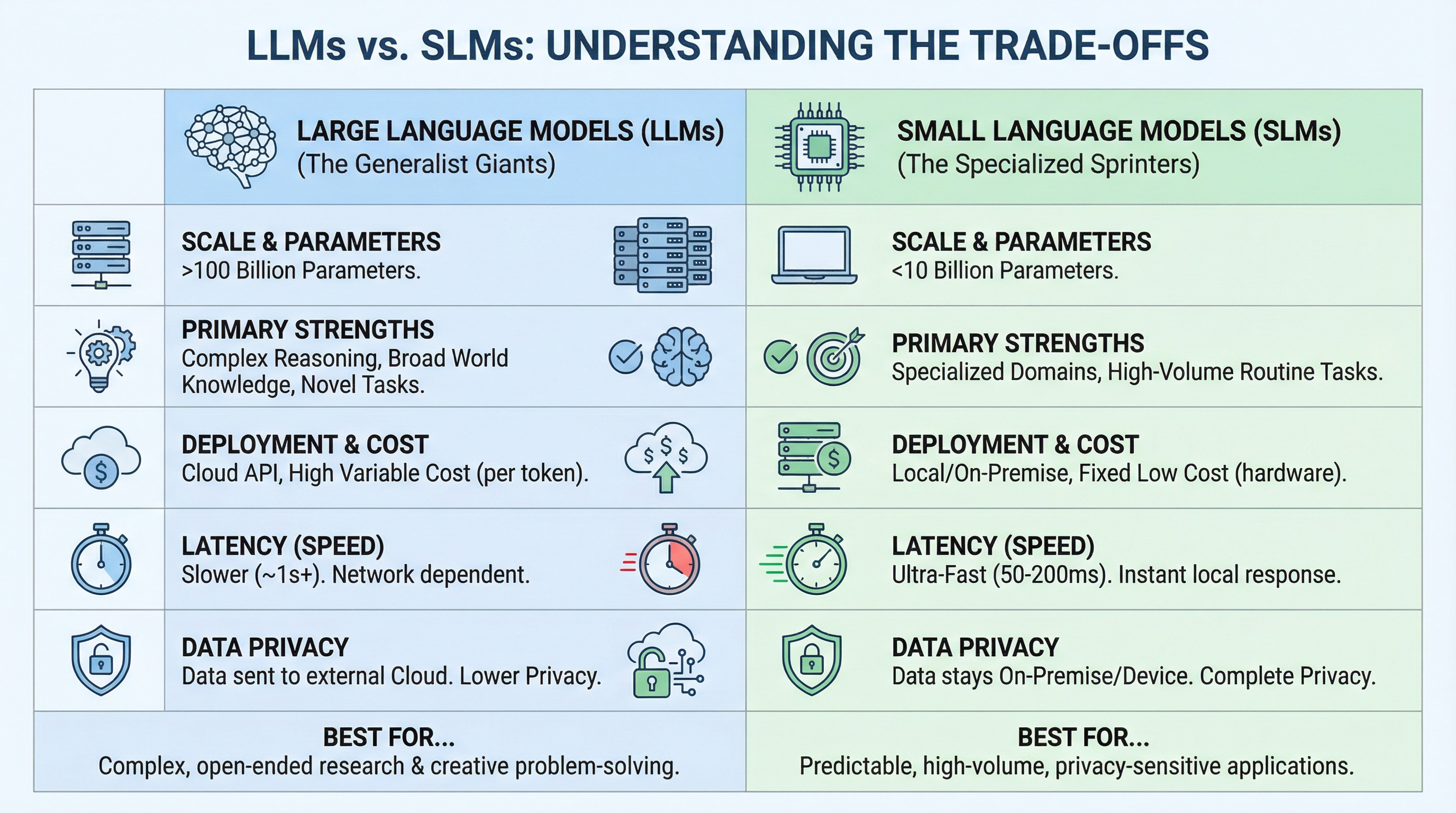

LLMs vs SLMs: Understanding the Trade-offs

The choice between an LLM and an SLM depends on matching capability to requirements. The differences come down to scale, deployment model, and the nature of the task.

The comparison reveals a pattern: LLMs are designed for breadth and unpredictability, while SLMs are built for depth and repetition. If your task requires handling any question about any topic, you need an LLM’s broad knowledge. But if you’re solving the same type of problem thousands of times, an SLM fine-tuned for that specific domain will be faster, cheaper, and often more accurate.

Here’s a concrete example. If you’re building a legal document analyzer, an LLM can handle any legal question from corporate law to international treaties. But if you’re only processing employment contracts, a fine-tuned 7B model will be faster, cheaper, and more accurate on that specific task.

Most teams are landing on a hybrid approach: use SLMs for 80% of queries (the predictable ones) and escalate to LLMs for the complex 20%. This “router” pattern combines the best of both worlds.
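The router idea can be sketched in a few lines. This is a deliberately simple illustration, not a production design: the keyword list, the word-count threshold, and the `slm` and `llm` callables are all hypothetical, and a real router might rely on a trained classifier or on the SLM’s own confidence instead.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical intents the fine-tuned SLM is assumed to handle well.
KNOWN_INTENTS = ("refund", "order status", "password reset", "shipping")


@dataclass
class Route:
    target: str  # "slm" or "llm"
    reason: str


def route_query(query: str, max_words: int = 60) -> Route:
    """Send short, recognizable queries to the SLM and everything else to the LLM."""
    text = query.lower()
    if len(text.split()) > max_words:
        return Route("llm", "query is long, likely complex")
    if any(intent in text for intent in KNOWN_INTENTS):
        return Route("slm", "matches a known intent")
    return Route("llm", "no known intent matched")


def answer(query: str, slm: Callable[[str], str], llm: Callable[[str], str]) -> str:
    """Dispatch the query to whichever model the router picked."""
    route = route_query(query)
    return slm(query) if route.target == "slm" else llm(query)
```

Defaulting to the LLM when nothing matches keeps quality safe at the expense of cost; logging each `Route.reason` shows over time which intents are worth moving to the SLM.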

How SLMs Achieve Their Edge

SLMs aren’t just “small LLMs.” They use specific techniques to deliver high performance at low parameter counts.

Knowledge Distillation trains smaller “student” models to mimic larger “teacher” models. The student learns to replicate the teacher’s outputs without needing the same massive architecture, and a well-distilled student can retain most of the teacher’s capability at a small fraction of its size. Microsoft’s Phi-3 series applies a related idea, learning in part from synthetic data generated by larger models.
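As an illustration of what “mimicking the teacher” means numerically, the sketch below computes the classic soft-target distillation loss: the KL divergence between temperature-softened teacher and student distributions, scaled by the temperature squared. It uses only the standard library and toy logits; it is not the training recipe of any particular model.

```python
import math


def softmax(logits, temperature=1.0):
    """Convert logits to probabilities; higher temperature gives a softer distribution."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(student_logits, teacher_logits, temperature=2.0):
    """KL(teacher || student) on softened distributions, scaled by T^2."""
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    # Terms where the teacher probability is zero contribute nothing.
    kl = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)
    return kl * temperature ** 2


teacher = [4.0, 1.0, 0.5]       # toy logits over three tokens
close_student = [3.5, 1.2, 0.4]
far_student = [0.5, 1.0, 4.0]
print(distillation_loss(close_student, teacher) < distillation_loss(far_student, teacher))
```

In practice this term is usually combined with the ordinary cross-entropy loss on the true labels, and the computation runs on tensors inside a training framework instead of Python lists.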

High-Quality Training Data matters more for SLMs than sheer data quantity. While LLMs are trained on trillions of tokens from the entire internet, SLMs benefit from curated, high-quality datasets. Phi-3 was trained on heavily filtered web data plus “textbook-quality” synthetic data, carefully selected to remove noise and redundancy.

Quantization compresses model weights from 16-bit or 32-bit floating point to 4-bit or 8-bit integers. A 7B parameter model in 16-bit precision requires about 14GB of memory for its weights alone. Quantized to 4-bit, it fits in roughly 3.5GB (small enough to run on a laptop). Modern quantization schemes, such as those distributed in the GGUF file format used by llama.cpp, can maintain 95%+ of model quality while achieving a 75% size reduction.
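The memory figures follow directly from parameters × bits ÷ 8. The sketch below reproduces that arithmetic and adds a toy symmetric quantizer to show where the quality loss comes from. The per-tensor scheme here is a simplification chosen for clarity: real 4-bit formats typically quantize in small blocks, each with its own scale, so actual files are somewhat larger than the raw estimate.

```python
def weight_memory_gb(num_params, bits_per_weight):
    """Memory needed for the weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


def quantize_symmetric(weights, bits=4):
    """Map floats to signed integers in [-qmax, qmax] using one shared scale."""
    qmax = 2 ** (bits - 1) - 1                      # 7 for 4-bit, 127 for 8-bit
    scale = max(abs(w) for w in weights) / qmax or 1.0  # avoid a zero scale
    quantized = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float weights from the integers."""
    return [q * scale for q in quantized]


print(weight_memory_gb(7e9, 16))  # 14.0
print(weight_memory_gb(7e9, 4))   # 3.5

weights = [0.82, -0.31, 0.05, -0.77, 0.44]  # toy values
q, scale = quantize_symmetric(weights, bits=4)
restored = dequantize(q, scale)
print(max(abs(a - b) for a, b in zip(weights, restored)))  # worst-case rounding error
```

The rounding error per weight is bounded by half the scale, which is why fewer bits (a coarser grid) cost more accuracy and why block-wise scales help.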

Architectural Optimizations like sparse attention reduce computational overhead. Instead of every token attending to every other token, models use techniques like sliding-window attention, which restricts each token to a recent window of context, or grouped-query attention, which shares key and value heads across several query heads to shrink the memory footprint.
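To see how sliding-window attention cuts work, the sketch below builds the attention mask as nested lists and counts how many token pairs are actually computed. The sequence length and window size are arbitrary illustrative values.

```python
def causal_mask(seq_len):
    """Full causal attention: token i may attend to every token j <= i."""
    return [[j <= i for j in range(seq_len)] for i in range(seq_len)]


def sliding_window_mask(seq_len, window):
    """Causal attention limited to the most recent `window` tokens (including itself)."""
    return [[j <= i and i - j < window for j in range(seq_len)]
            for i in range(seq_len)]


def attended_pairs(mask):
    """Number of (query, key) pairs that need an attention score."""
    return sum(sum(row) for row in mask)


print(attended_pairs(causal_mask(6)))             # 21
print(attended_pairs(sliding_window_mask(6, 3)))  # 15
```

Full causal attention grows quadratically with sequence length, while the windowed version grows roughly as length × window, so the gap widens quickly on long inputs.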

Production Use Cases

SLMs are already running production systems across industries.

Customer Support: A major e-commerce platform replaced GPT-3.5 API calls with a fine-tuned Mistral 7B for tier-1 support queries. They saw a 90% cost reduction, 3× faster response times, and equal or better accuracy on common questions. Complex queries still escalate to GPT-4, but 75% of tickets are handled by the SLM.

Code Assistance: Development teams run Llama 3.2 3B locally for code completion and simple refactoring. Developers get instant suggestions without sending proprietary code to external APIs. When the model is fine-tuned on the company’s codebase, it understands internal patterns and libraries.

Document Processing: A healthcare provider uses Phi-3 Mini to extract structured data from medical records. The model runs on-premise in a HIPAA-compliant environment, processing thousands of documents per hour on standard server hardware. Previously, they avoided AI entirely due to privacy constraints.

Mobile Applications: Translation apps now embed 1B parameter models directly in the app. Users get instant translations without internet connectivity. Battery life is better than with cloud API calls, and translations work on flights or in remote areas.

When not to use SLMs: Open-ended research questions, creative writing requiring novelty, tasks needing broad knowledge, or complex multi-step reasoning. An SLM won’t write an original screenplay or solve novel physics problems. But for well-defined, repeated tasks, they’re ideal.

Getting Began with SLMs

If you’re new to SLMs, start here.

Run a quick test. Install Ollama and run Llama 3.2 3B or Phi-3 Mini on your laptop. Spend a day testing it on your actual use cases. You’ll immediately understand the speed difference and capability boundaries.
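For the quick test, one option is to call the local Ollama server from Python and time each response. This sketch assumes Ollama is installed and serving on its default port (11434) and that the model has already been pulled; the `llama3.2:3b` tag and the helper names `build_request` and `ask` are illustrative choices.

```python
import json
import time
import urllib.request

# Default local endpoint of Ollama's generate API (assumes a standard install).
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_request(prompt, model="llama3.2:3b"):
    """Build a non-streaming generate request for the local Ollama server."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(prompt, model="llama3.2:3b", timeout=120):
    """Send the prompt and return (response text, elapsed seconds)."""
    start = time.perf_counter()
    with urllib.request.urlopen(build_request(prompt, model), timeout=timeout) as resp:
        body = json.loads(resp.read().decode("utf-8"))
    return body["response"], time.perf_counter() - start
```

With the server running, `text, seconds = ask("Summarize this ticket: ...")` returns the model’s answer and how long it took. Running a few dozen real prompts from your own workload through `ask` gives a first picture of both quality and latency on your hardware.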

Identify your use case. Look at your AI workloads. What percentage are predictable, repeated tasks versus novel queries? If more than 50% are predictable, you have a strong SLM candidate.

Fine-tune if needed. Collect 500 to 1,000 examples of your specific task. Fine-tuning takes hours, not days, and the performance improvement can be significant. Tools like Hugging Face’s Transformers library and platforms like Google Colab make this accessible to developers with basic Python skills.

Deploy locally or on-premise. Start with a single GPU server or even a beefy laptop. Monitor cost, latency, and quality. Compare against your current cloud API spend. Most teams find ROI within the first month.

Scale with a hybrid approach. Once you’ve proven the concept, add a router that sends simple queries to your SLM and complex ones to a cloud LLM. This works well for both cost and capability.

Key Takeaways

The trend in AI isn’t just “bigger models.” It’s smarter deployment. As SLM architectures improve and quantization techniques advance, the gap between small and large models narrows for specialized tasks.

In 2026, successful AI deployments aren’t measured by which model you use. They’re measured by how well you match models to tasks. SLMs give you that flexibility: the ability to deploy capable AI where you need it, on hardware you control, at costs that scale with your business.

For most production workloads, the question isn’t whether to use SLMs. It’s which tasks to start with.