As companies of all sizes adopt graphics processing unit (GPU)-based machine learning (ML) training, fine-tuning, and inference workloads, the demand for GPU capacity has outpaced industry-wide supply. This imbalance has made GPUs a scarce resource, creating a challenge for customers who need reliable access to GPU compute resources for their ML workloads.

If you encounter GPU capacity limitations, you might consider creating on-demand capacity reservations (ODCRs). ODCRs are suited to planned, steady-state workloads with well-understood usage patterns. Short-term ODCR availability for GPU instances, particularly P-type instances, is often limited. Moreover, without a long-term contract, ODCRs are billed at on-demand rates, offering no cost advantage. This makes ODCRs unsuitable for short or exploratory workloads such as testing, evaluations, or events. A guided approach to securing short-term GPU capacity becomes necessary.

In this post, you'll learn how to secure reserved GPU capacity for short-term workloads using Amazon Elastic Compute Cloud (Amazon EC2) Capacity Blocks for ML and Amazon SageMaker training plans. These solutions can address GPU availability challenges when you need short-term capacity for load testing, model validation, time-bound workshops, or preparing inference capacity ahead of a launch.

Solution overview and short-term GPU options

There are several ways to access GPU capacity on AWS for short-term workloads:

On-demand GPU instances

On-demand instances are usually the first option for short-term GPU usage. If capacity is available at launch time, you can start using GPU instances immediately without prior commitment. This works well for ad hoc experiments, short tests, and development tasks.

On-demand GPU capacity depends on regional supply and current demand, and availability can change quickly. If you stop or scale down an instance, you might not be able to reacquire the same capacity when you need it again. This uncertainty often leads to keeping GPU instances running longer than needed, which can increase cost. Choose on-demand instances when your workload can tolerate potential launch delays or when timing is flexible.

Spot GPU instances

Spot instances can reduce your GPU compute costs by up to 90%, but they trade availability certainty for cost savings. Spot capacity depends on unused capacity in the AWS Region. Instances can be interrupted when Amazon EC2 needs the capacity back, so spot instances are suitable only for workloads that can handle interruption.

For ML workloads, spot instances work well when you can checkpoint progress and restart. Recommended use cases include distributed training jobs with periodic checkpoints, batch inference workloads that can be retried, and workshop environments that are designed to tolerate partial capacity.
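The checkpoint-and-restart pattern can be sketched as follows. This is a minimal illustration, not a training framework: the function names, the JSON checkpoint format, and the `interrupt_at` parameter (which simulates a spot interruption) are all assumptions made for this example.

```python
import json
import os
import tempfile


def save_checkpoint(path, state):
    """Write state to a temp file, then rename, so a crash mid-write cannot corrupt it."""
    directory = os.path.dirname(os.path.abspath(path))
    fd, tmp_path = tempfile.mkstemp(dir=directory)
    with os.fdopen(fd, "w") as f:
        json.dump(state, f)
    os.replace(tmp_path, path)  # atomic when both paths are on the same filesystem


def load_checkpoint(path):
    """Return the saved state, or a fresh state if no checkpoint exists yet."""
    if os.path.exists(path):
        with open(path) as f:
            return json.load(f)
    return {"step": 0}


def run(path, total_steps, checkpoint_every=10, interrupt_at=None):
    """Resume from the last checkpoint; interrupt_at simulates a spot interruption."""
    state = load_checkpoint(path)
    while state["step"] < total_steps:
        if interrupt_at is not None and state["step"] == interrupt_at:
            return state["step"]  # progress since the last checkpoint is lost
        state["step"] += 1  # placeholder for one unit of real work
        if state["step"] % checkpoint_every == 0 or state["step"] == total_steps:
            save_checkpoint(path, state)
    return state["step"]
```

After an interruption, calling `run` again with the same path picks up from the last saved step, so the work lost is bounded by `checkpoint_every`. In practice the checkpoint would live on durable storage that outlives the instance.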

Amazon EC2 Capacity Blocks for ML

Amazon EC2 Capacity Blocks for ML reserves GPU capacity for a specific time window so that the requested instances will be available when you launch them during the reserved period. Unlike ODCRs, Capacity Blocks are fully self-service and offer better short-term availability for GPU instances at a 40-50% discounted price. Each Capacity Block represents a reservation of a specific number of instances of a particular instance type for a defined duration. You can:

Capacity Blocks apply to workloads that run directly on Amazon EC2, where you manage the operating system, networking, and orchestration layers yourself.

Service level agreement (SLA) and hardware failures: If hardware fails during your reservation, you can terminate the affected instance and manually launch a replacement into the same Capacity Block reservation. The system returns the reserved capacity slot to your reservation after roughly 10 minutes of cleanup. Amazon EC2 maintains a buffer within each Capacity Block to support relaunching instances in case of hardware degradation, at no additional cost.

Note: Capacity Blocks have the following limitations:

Amazon SageMaker training plans

Amazon SageMaker training plans provide access to reserved GPU capacity for ML workloads in the Amazon SageMaker AI managed environment, such as training jobs, Amazon SageMaker HyperPod clusters, and inference. SageMaker training plans aren't interchangeable with EC2 Capacity Blocks. With SageMaker training plans, you can:

- Schedule reservations for specific GPU-based instances and durations.

- Access your capacity without managing underlying infrastructure.

- Use a range of accelerated computing options, including the latest NVIDIA GPUs and AWS Trainium accelerators.

Note that G-type instances (except G6 instances) aren't currently supported by SageMaker training plans. If you need G6 instances, contact your AWS account team. For detailed information about the supported instance types in a given AWS Region, duration, and quantity options, see Supported instance types, AWS Regions, and pricing.

Amazon SageMaker training plans apply to:

Choose this option when you want Amazon SageMaker AI to manage instance provisioning, scaling, and lifecycle while still securing reserved capacity during a defined window.

Decision framework: choosing the right option

When planning your short-term GPU strategy, you should evaluate options based on three key factors:

- Availability: From on-demand to reserved capacity.

- Cost model: On-demand pricing or upfront commitments at lower than on-demand pricing.

- Workload environment: Amazon EC2 direct access compared to Amazon SageMaker-managed workloads.

- From short-term to long-term capacity planning: While this post focuses on securing short-term GPU capacity, you might need to plan for longer-term or recurring workloads. You can run assessments based on historical data, or use short-term GPU resources to load test your workload and gain a better understanding of the number and type of instances needed for production. For production deployments or large-scale events requiring significant GPU capacity, start planning at least three weeks in advance. Work with your AWS account team to assess your requirements and develop a capacity strategy that meets your timeline.

Cost consideration

- Capacity Blocks for ML require upfront payment and offer 40-50% lower hourly rates compared to on-demand pricing. For example, in US East (N. Virginia), p5.48xlarge costs $34.608/hour with Capacity Blocks versus $55.04/hour on-demand.

- SageMaker training plans are priced 70-75% below on-demand rates. You pay the price up front at the time you schedule the reservation. AWS regularly updates prices based on trends in supply and demand. You pay the rate that's current at the time that you make the reservation, even if the price changes before the training plan starts.

- If your instances don't run continuously throughout the reservation period, the total cost of making reservations might exceed on-demand cost. Evaluate based on your workload's actual runtime needs.

- Disclaimer: All pricing figures referenced in this section are based on publicly available AWS pricing as of the date of publication and are subject to change. For the most current pricing, refer to Amazon EC2 pricing and SageMaker AI pricing.
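To make the runtime trade-off concrete, the following sketch computes the utilization at which a reservation breaks even with on-demand pricing. It uses the p5.48xlarge rates quoted above; the function names are hypothetical, and the model assumes simple hourly billing, assumes on-demand capacity would actually be available, and ignores storage, data transfer, and other charges.

```python
def break_even_utilization(reserved_rate, on_demand_rate):
    """Fraction of the reserved window you must use for the reservation to cost less."""
    return reserved_rate / on_demand_rate


def compare_costs(reserved_rate, on_demand_rate, reserved_hours, used_hours):
    """Return (reservation cost, on-demand cost) for the same amount of actual usage.

    The reservation is paid for the whole window; on-demand is paid only for
    the hours the instance actually runs.
    """
    reserved_cost = reserved_rate * reserved_hours
    on_demand_cost = on_demand_rate * used_hours
    return reserved_cost, on_demand_cost


# Rates from the example above (US East, p5.48xlarge, subject to change).
CAPACITY_BLOCK_RATE = 34.608
ON_DEMAND_RATE = 55.04

utilization = break_even_utilization(CAPACITY_BLOCK_RATE, ON_DEMAND_RATE)
reserved, on_demand = compare_costs(CAPACITY_BLOCK_RATE, ON_DEMAND_RATE, 24, 12)
print(f"break-even utilization: {utilization:.1%}")
print(f"24h reserved: ${reserved:.2f}, 12h on-demand: ${on_demand:.2f}")
```

With these rates, the reservation only pays off on price if the instance runs for roughly 63% of the reserved window or more. Below that, the reservation buys availability certainty rather than savings.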

Decision process

Start with the least restrictive option and move toward reserved capacity when availability or timing becomes critical.

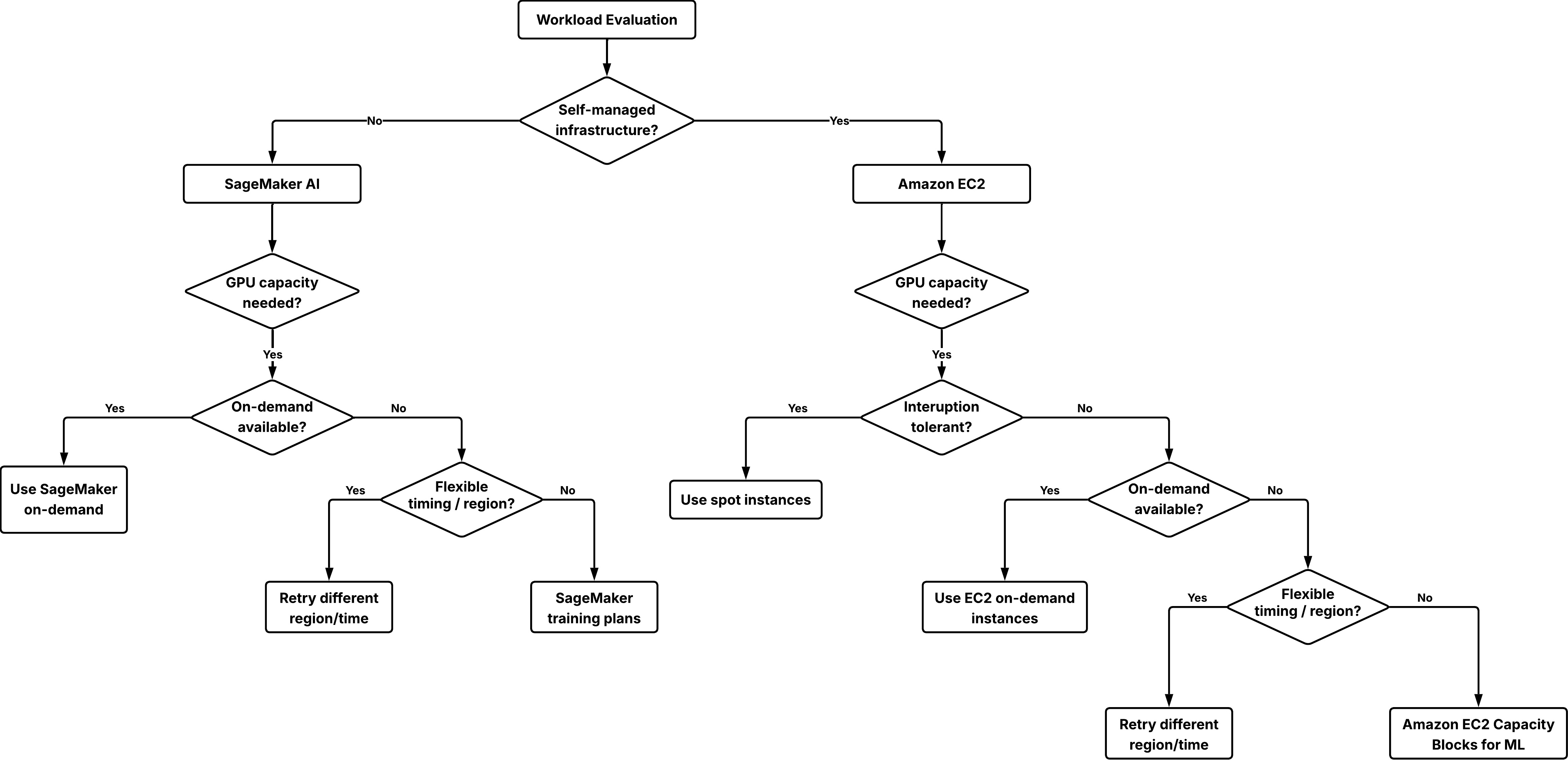

Decision tree to choose the right option for securing GPU capacity.

Step 1: Determine your infrastructure management model

- If you need full control over the operating system, networking, and orchestration, use Amazon EC2 with on-demand instances, spot instances, or Capacity Blocks.

- If you want a managed service that handles infrastructure provisioning and operations for you, use Amazon SageMaker AI with SageMaker on-demand or SageMaker training plans for ml.* instance types.

Step 2: Try on-demand capacity first

For both Amazon EC2 and Amazon SageMaker AI workloads, start with on-demand capacity. This approach:

- Requires no prior commitment.

- Allows an immediate start if capacity is available.

If an initial launch fails, try these flexibility options:

- Try a different AWS Region where capacity might be available.

- Adjust the start time to off-hours when demand is usually lower.

- Use spot instances as a supplement for workloads that can tolerate interruption.

Step 3: Use reserved capacity when certainty is required

If your workload must start at a specific time or your delivery timeline depends on reserved GPU access, reserving capacity becomes the appropriate choice:

- For Amazon EC2 workloads, use Capacity Blocks for ML.

- For Amazon SageMaker AI workloads, use Amazon SageMaker training plans for training jobs, HyperPod clusters, or inference workloads.
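The three steps above can be read as a small decision function. The sketch below is one possible encoding, not an official tool: the `Workload` fields and the returned strings are hypothetical names chosen for this example, and it checks the need for a guaranteed start first because that requirement overrides the other considerations.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    managed_by_sagemaker: bool        # Step 1: managed service vs. direct EC2 control
    must_start_on_schedule: bool      # Step 3: is a guaranteed start required?
    on_demand_launch_succeeded: bool  # Step 2: did an on-demand launch work?
    can_be_flexible: bool             # Step 2: other Region, off-hours, or spot is acceptable


def recommend(workload):
    """Return a suggested capacity option following the three steps in this post."""
    if workload.managed_by_sagemaker:
        reserved = "SageMaker training plans"
    else:
        reserved = "EC2 Capacity Blocks for ML"

    if workload.must_start_on_schedule:
        return reserved
    if workload.on_demand_launch_succeeded:
        return "on-demand"
    if workload.can_be_flexible:
        return "retry on-demand with another Region, off-hours start, or spot"
    return reserved


print(recommend(Workload(False, True, False, False)))
print(recommend(Workload(True, False, False, True)))
```

The final fallback returns the reserved option because a workload that cannot launch on demand and cannot flex on Region, timing, or interruption has no other path left in this framework.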

Technical implementation: Reserving GPU capacity for inference with SageMaker training plans

This section shows you how to reserve and use GPU capacity for inference workloads managed by Amazon SageMaker training plans. Note that SageMaker training plan reservations are specific to the chosen target resource. A plan purchased for inference can't be used for training jobs or HyperPod clusters, or the reverse.

For other scenarios:

Prerequisites

Before you begin, confirm that you have:

{
    "Version": "2012-10-17",
    "Statement": [
        {
            "Effect": "Allow",
            "Action": [
                "sagemaker:CreateEndpointConfig",
                "sagemaker:CreateEndpoint",
                "sagemaker:DescribeEndpoint",
                "sagemaker:DeleteEndpoint",
                "sagemaker:DeleteEndpointConfig"
            ],
            "Resource": [
                "arn:aws:sagemaker:*:*:endpoint/*",
                "arn:aws:sagemaker:*:*:endpoint-config/*"
            ]
        }
    ]
}

Create a training plan

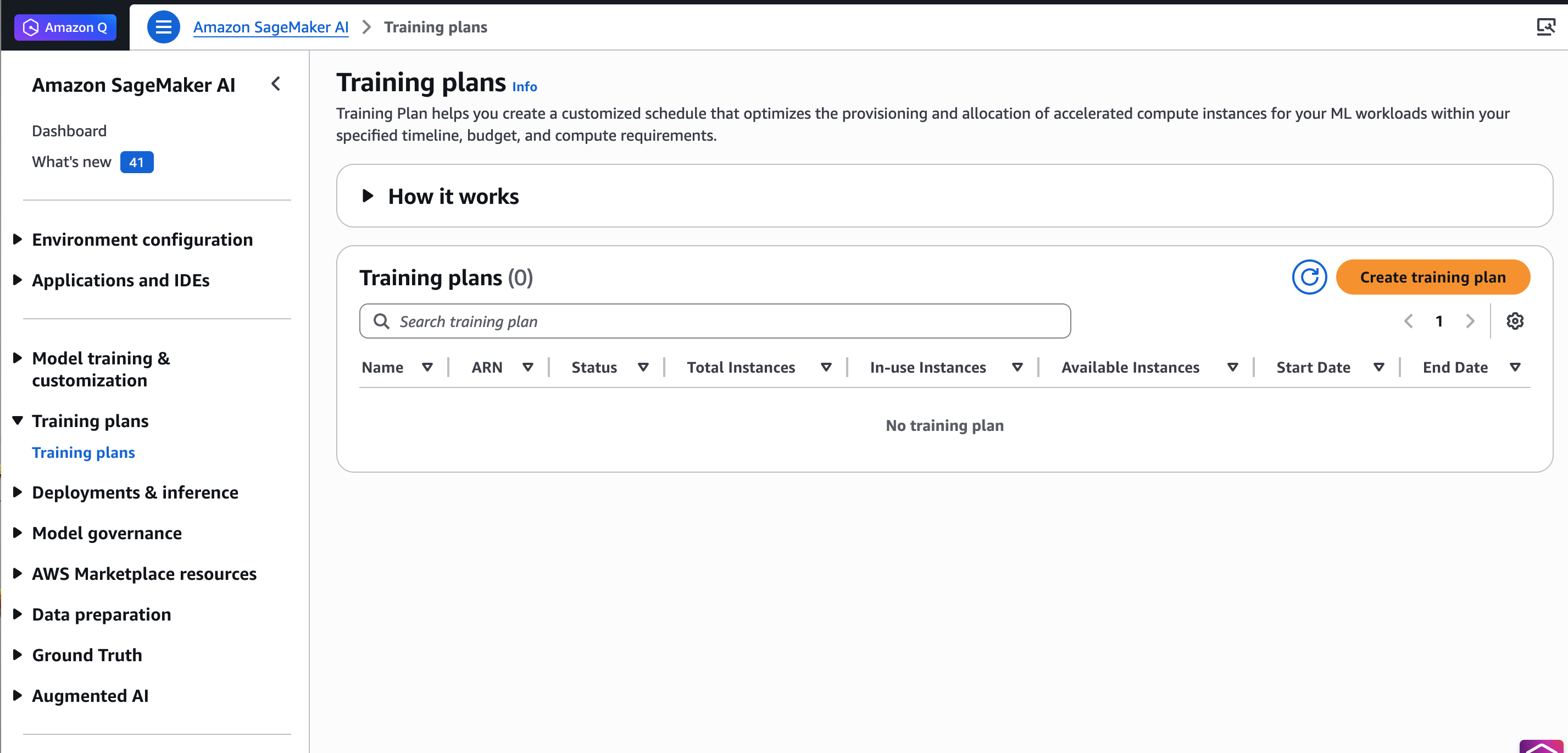

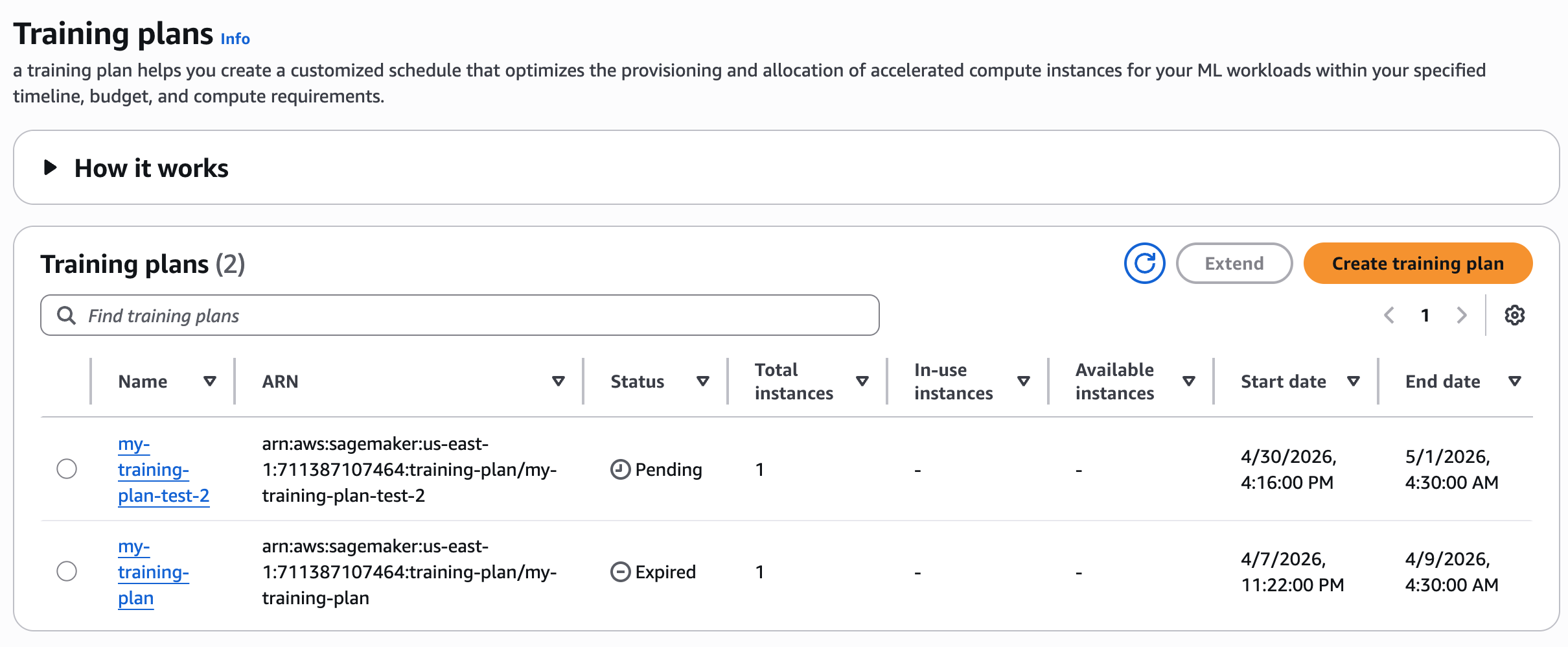

To get started, go to the Amazon SageMaker AI console, choose Training plans in the left navigation pane, and choose Create training plan.

The Training plans page in the Amazon SageMaker AI console.

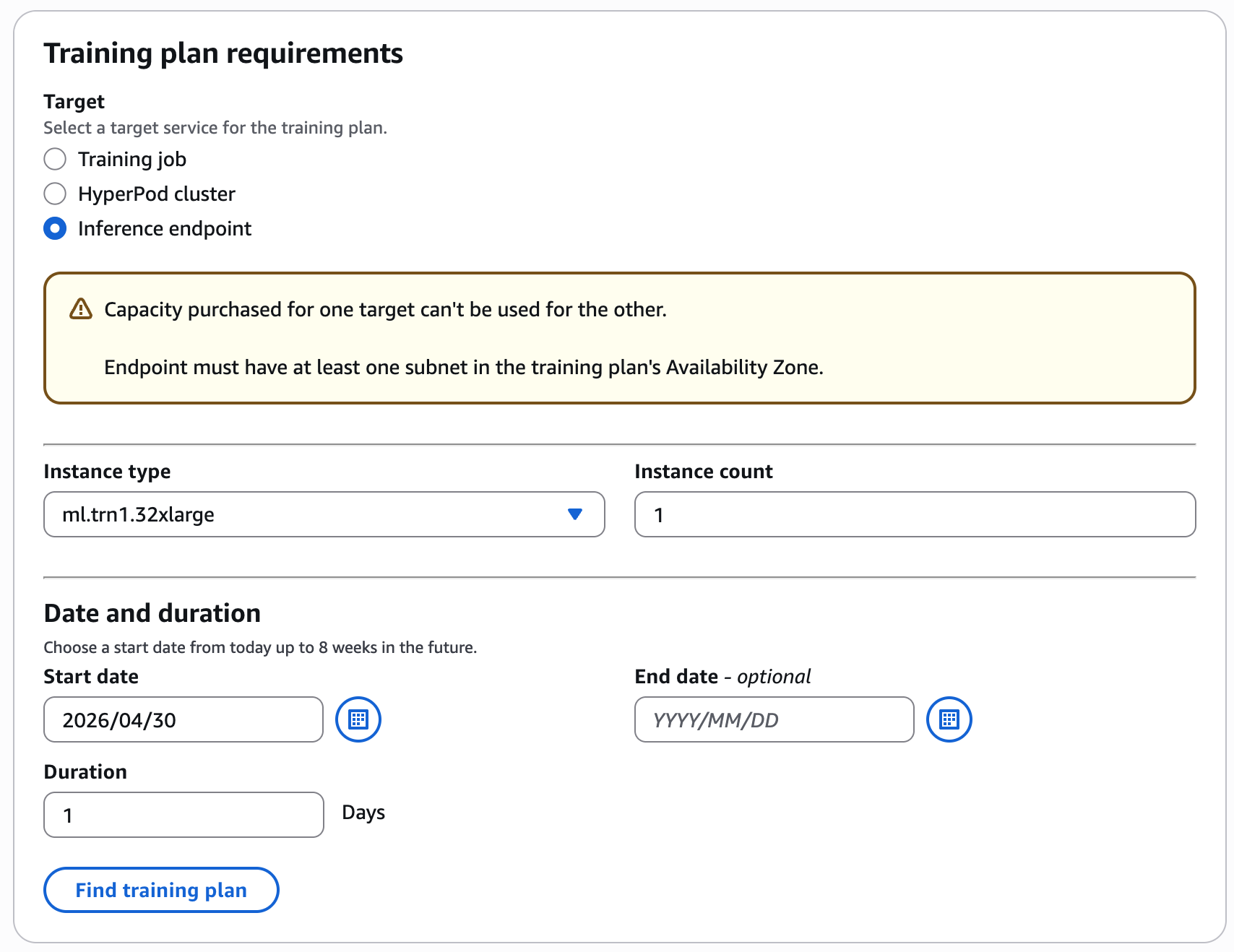

For example, choose your preferred training date and duration (1 day), instance type and count (1 ml.trn1.32xlarge) for Inference Endpoint, and choose Find training plan.

Configure your training plan by selecting the instance type, instance count, date, and duration for your inference workload.

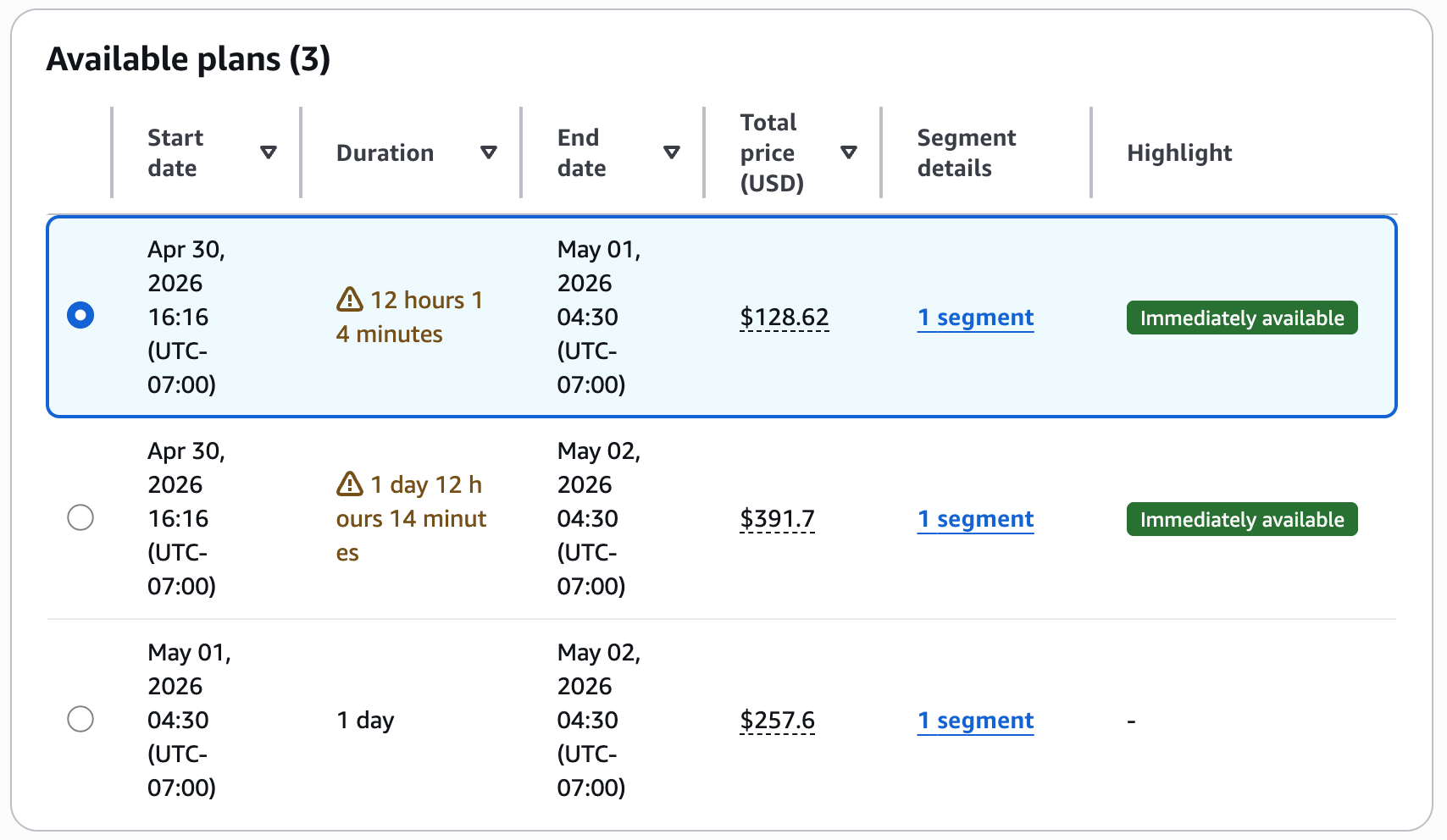

The console displays available plans with the total price.

Review the suggested plans with upfront pricing before accepting the reservation.

If you accept this training plan, add your training details in the next step and choose Create your plan.

Note: SageMaker training plans can't be canceled after purchase. The reservation expires automatically at the end of the reserved period.

To monitor training plan status

Review your training plan status in the console.

After creating your training plan, you can see the list of training plans. The plan initially enters a Pending state, awaiting payment. You pay the full price of a training plan up front. After AWS completes payment processing, the plan transitions to the Scheduled state. On the plan's start date, it becomes Active, and the system allocates resources for your use.

To verify training plan status with AWS CLI

Use the following command to check the training plan status:

aws sagemaker describe-training-plan \
    --training-plan-name your-training-plan-name \
    --region your-region

When the response shows "Status": "Active", you can start running your inference tasks. Verify that the TargetResources field shows endpoint to confirm the plan is configured for inference workloads.
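If you script this check, the two conditions can be tested against the command's JSON output. The following is a minimal sketch: the function name is hypothetical, and it assumes the response is a JSON object with a `Status` string and a `TargetResources` field that is either a list of strings or a single string.

```python
import json


def plan_ready_for_inference(describe_output):
    """Return True if the plan is Active and targets inference endpoints.

    describe_output is the JSON text printed by the describe-training-plan
    command (response shape assumed as described above).
    """
    plan = json.loads(describe_output)
    targets = plan.get("TargetResources", [])
    if isinstance(targets, str):
        targets = [targets]  # tolerate a single value instead of a list
    return plan.get("Status") == "Active" and "endpoint" in targets


# Hand-written sample responses for illustration, not real API output.
scheduled = '{"Status": "Scheduled", "TargetResources": ["endpoint"]}'
active = '{"Status": "Active", "TargetResources": ["endpoint"]}'
print(plan_ready_for_inference(scheduled), plan_ready_for_inference(active))
```

Checking both fields guards against deploying to a plan that is active but was purchased for training jobs or HyperPod clusters, which can't serve an inference endpoint.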

To create endpoint configuration

Use the following command to create an endpoint configuration that uses the training plan resources:

aws sagemaker create-endpoint-config \
    --endpoint-config-name your-endpoint-config-name \
    --production-variants '[
        {
            "VariantName": "your-variant-name",
            "ModelName": "your-model-name",
            "InitialInstanceCount": 1,
            "InstanceType": "ml.trn1.32xlarge",
            "CapacityReservationConfig": {
                "MlReservationArn": "your-training-plan-arn",
                "CapacityReservationPreference": "capacity-reservations-only"
            }
        }
    ]'

To deploy the endpoint

Create your endpoint resource by specifying the endpoint configuration from the previous step:

aws sagemaker create-endpoint \
    --endpoint-name your-endpoint-name \
    --endpoint-config-name your-endpoint-config-name

To verify endpoint status

Check your endpoint status and training plan capacity reservation status:

aws sagemaker describe-endpoint \
    --endpoint-name your-endpoint-name \
    --region your-region

Clean up resources

To avoid incurring ongoing charges, delete the resources that you created:

Delete the endpoint:

aws sagemaker delete-endpoint --endpoint-name your-endpoint-name

Delete the endpoint configuration:

aws sagemaker delete-endpoint-config --endpoint-config-name your-endpoint-config-name

Conclusion

Securing GPU capacity for short-lived workloads requires a different approach than planning long-term, steady-state usage. In this post, you learned how to approach short-term GPU capacity planning by:

- Starting with on-demand capacity and increasing flexibility when possible.

- Distinguishing between Amazon EC2-based workloads and Amazon SageMaker AI managed workloads.

- Reserving capacity using Capacity Blocks or SageMaker training plans when availability and certainty are required.

You also learned how to use SageMaker training plans to reserve GPU capacity ahead of time. This capability helps reduce operational friction when preparing inference capacity for planned evaluations, releases, or anticipated traffic increases.

To learn more, refer to the following resources:

About the authors